WEEK 1

1. Who's Who in Localization?

A major part of the class this week was to identify who does what in a typical localization request on the vendor side.

This was new to me. This part emphasized that quality is influenced by many roles, including those not traditionally labeled as “QA.” Sales influences quality by setting expectations and validating feasibility. Project managers influence quality through planning, communication, and vendor coordination. Linguists and reviewers directly execute quality-related tasks, but they do not operate in isolation. Before this class, to be honest, I often associated quality ownership too narrowly with execution roles, rather than seeing it as distributed across the workflow.

2. Quiz Reflection

The short quiz associated with this module exposed some gaps in my understanding of role boundaries. Reviewing my mistakes reinforced an important lesson. From a quality management perspective, quality risks are often introduced before a project even reaches production. Intake, feasibility checks, and expectation-setting are quality-critical moments, even though they occur outside traditional QA processes. The corrections reminded me that quality management starts earlier than we normally assume.

3. Who Is Responsible for Quality?

Harry did not provide a definitive answer to this question in class. Based on my own experience, I came away with the view that although quality-related tasks can be distributed, responsibility itself cannot be fully delegated. No single role can absorb total accountability, but quality also cannot be treated as “everyone’s job” in a vague sense.

Instead, responsibility for quality shifts across stages of the workflow. Different roles carry greater responsibility at different points, depending on where quality risks are most likely to be introduced.

WEEK 2

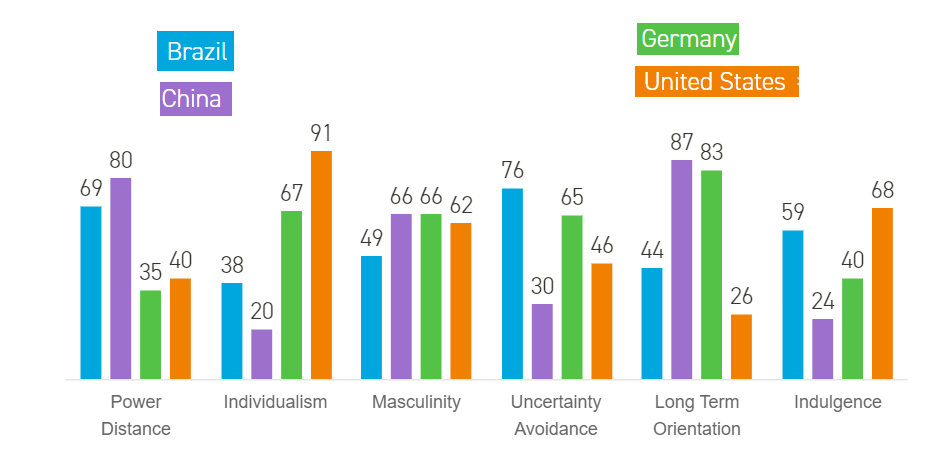

1. Quality Depends on Where You Sit

Harry shared an observation from our Week 1 homework that I found interesting and had never had a chance to think about before: when people describe quality as end users, they tend to describe it emotionally. When people describe quality as someone in the supply chain or production flow, they become more objective and requirement-driven.

This rings true. Different people care about different quality aspects. Some things are simply assumed by users, like safety, even if they do not list it as a “quality criterion”. If a client is not the final user of the localized product, their quality definition may be indirectly shaped by business goals, timelines, or internal processes rather than actual user experience. That creates a risk: we might satisfy the client’s stated expectations but still miss what our users actually need.

2. The Client Is Not the Final User, So “Quality” Has an Extra Layer

For example, compare app or product UI localization, where the company is the buyer but not the reader, with legal or internal documents, where the buyer is also the user.

This matters because it changes how I interpret client feedback. When the client is not the end user, “quality” can sometimes mean internal usability, risk reduction, or operational fit. If I only chase linguistic perfection, I might miss what the business actually considers a successful outcome.

3. The Quality Guru

The core content of Week 2 was built around the pre-class video (https://www.youtube.com/watch?v=d7qpjsRbg6c) and the idea that quality has multiple definitions.

This was the first time in the course where “quality” stopped being a vague value word and became a practical negotiation problem. If quality can mean “fit for purpose” and also “free of defects,” then the real work is figuring out which one is driving decision-making for this specific project.

4. Turning “Quality” Into Follow-Up Questions

A big part of the class was asking: if a client says “I want a high-quality translation,” is that statement helpful? The answer was basically “not really,” unless we translate it into more specific follow-up questions.

For example, asking directly about tone or narratives can backfire because the client may not be a linguist, or may expect us to infer tone from the source. Timeline expectations need to be specific, not a vague “ASAP,” and PMs should build in a buffer rather than passing deadlines verbatim downstream.

WEEK 3

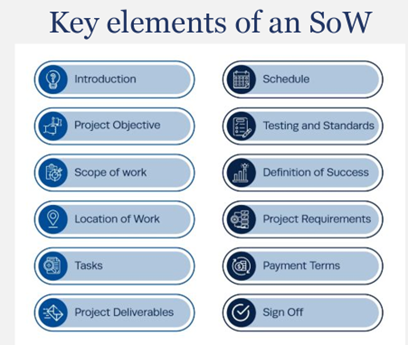

1. The SoW as the 1st Documented Quality Guardrail

A central idea this week was treating the Statement of Work as the first documented quality guardrail.

The SoW is the structured version of the clarified client request.

Even though a full SoW may contain many elements, the minimum version in localization must clearly state what is to be done, by when, and for how much.

From a quality perspective, however, the more important question is how different sections of the SoW map to quality requirements.

If quality means fit for purpose, then scope and objectives matter. If quality means free of defects, then testing and standards matter. If quality means value for money, then schedule and payment terms matter.

2. Finding and Instructing the Right Linguists

The next quality guardrail discussed was finding and instructing the right linguists.

This selection process is not random. It requires thinking about key elements such as language pairs, task type, location, and tools.

Instruction was framed more simply, but it is just as critical. Linguists must receive clear information about task scope, volume, deadline, and payment, along with any additional context that affects execution.

Based on my own experience, this connects directly to the “doing it right the first time” definition of quality from Week 2. If instructions are incomplete or ambiguous, rework becomes almost inevitable. Quality failure in this stage often shows up later as endless revision cycles, not immediate errors or red flags.

3. Sanity Checking

One of the most practical discussions this week was around sanity checking. The question raised in class was whether we should simply pass the translation back to the client once it is done. The implied answer was clearly no.

Even if we cannot understand the target language, there are still many checks we can perform, for example: file type, formatting, layout, completeness, measurement units, number consistency, date and time formats, scientific names, and structural alignment.
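To make one of these checks concrete for myself, here is a minimal sketch of a number consistency check that works without reading the target language. The function names and the regular expression are my own illustration, not something shown in class, and the check deliberately ignores thousands and decimal separators because those legitimately differ between locales.

```python
import re
from collections import Counter

# Matches integers and separator-formatted numbers such as "12", "3.5", or "1,200".
NUMBER_PATTERN = re.compile(r"\d+(?:[.,]\d+)*")


def extract_numbers(text: str) -> Counter:
    """Count each numeric token in the text, with separators stripped."""
    tokens = NUMBER_PATTERN.findall(text)
    # Strip "." and "," so "1,200" and "1.200" compare as equal across locales.
    return Counter(re.sub(r"[.,]", "", token) for token in tokens)


def number_mismatches(source: str, target: str) -> dict:
    """Report numbers that appear on one side of a segment but not the other."""
    src, tgt = extract_numbers(source), extract_numbers(target)
    return {
        "missing_in_target": sorted((src - tgt).elements()),
        "extra_in_target": sorted((tgt - src).elements()),
    }


report = number_mismatches(
    "Take 2 tablets every 8 hours, up to 1,200 mg.",
    "Tomar 2 comprimidos cada 6 horas, hasta 1.200 mg.",
)
print(report)
```

Because separators are stripped, this sketch cannot tell "3.5" from "35", so it is only a first filter. A flagged segment still needs a person to look at it.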

This part reminded me that quality management is not limited to linguistic expertise. Through these procedural steps, many visible client-facing failures could have been caught earlier.

4. Subjective and Objective Requirements

For me, this week’s discussion raised an interesting point about blurred boundaries between the basic six quality categories and whether this strict categorization is the goal.

I believe the real task here is identifying what is most important to the client and how to translate that into objective workflow deliverables.

End users often have their own subjective expectations, while people in the workflow must operate with objective, requirement-driven constraints. This feels like the core tension of quality management. The client might say, “I want it to feel premium.” The workflow needs something more concrete, such as tone guidelines, terminology preferences, or review thresholds. Our job is to bridge that gap.

WEEK 4

1. The Three Pillars of Quality Management

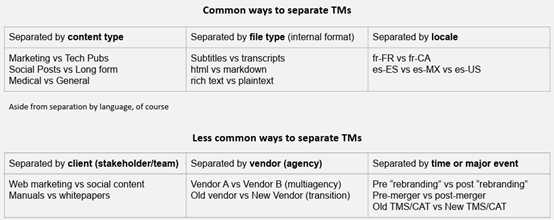

The core concept of this week focused on three pillars of localization, specifically Translation Memory, Termbase or Glossary, and Style Guide. Even though these all felt quite familiar, I realized there were still a few interesting aspects I hadn’t noticed before.

TM:

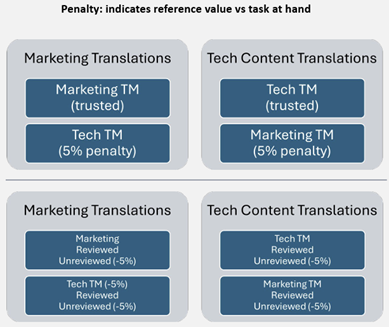

TMs are built from finalized source-target pairs and stored for reuse. They are commonly separated by content type, file type, locale, client, vendor, or time. TMs can be penalized to reflect trust level or relevance to the current task.
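A small sketch helped me picture how a penalty changes which match wins. The TM names and penalty values below are hypothetical, and real CAT tools each configure penalties in their own way, so this only illustrates the idea of subtracting points from a less trusted TM.

```python
from dataclasses import dataclass


@dataclass
class TMMatch:
    tm_name: str
    raw_score: int  # fuzzy match percentage reported for the segment, 0-100


# Hypothetical penalties in percentage points; real values are a project decision.
TM_PENALTIES = {"client_reviewed": 0, "legacy_unreviewed": 5, "other_vendor": 10}


def effective_score(match: TMMatch) -> int:
    """Subtract the TM's penalty from the raw match score, never going below 0."""
    penalty = TM_PENALTIES.get(match.tm_name, 0)
    return max(0, match.raw_score - penalty)


def best_match(matches: list) -> TMMatch:
    """Pick the match with the highest score after penalties are applied."""
    return max(matches, key=effective_score)


candidates = [TMMatch("legacy_unreviewed", 100), TMMatch("client_reviewed", 98)]
print(best_match(candidates).tm_name)
```

In this example the unreviewed TM offers a 100% match, but after its penalty the reviewed TM's 98% match ranks higher, so the translator is shown the more trustworthy suggestion first.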

Glossary:

Glossaries can be developed in three ways: from the client’s initial knowledge, from initial content analysis, and as work in progress. Terminology work needs coordination, feedback, and validation, often requiring access to “content specialists” on the client side.

Style Guide:

For style guides, the points are more pragmatic. What you can build depends on what you have on hand, what the client has, and how willing they are to answer questions. Localization style guides often govern translation quality rather than content creation, so certain content creation guardrails can be dropped in many cases.

2. Reuse Strategy

One point Harry put forward resonated deeply with me: TMs are not inherently good. Their value depends on provenance, review status, and relevance to the content at hand. That is why separation, sequencing, and penalties exist. They are governance mechanisms for trust. Reuse is powerful, but blindly trusting a TM can create systematic errors at scale.

WEEK 5

1. The TEP Process

The TEP process: Translation, Editing, and Proofreading. The workflow is linear. Work moves forward from one person to the next, and the assumption is that each step improves the output.

But this classic process depends heavily on one big assumption: the next person is more qualified and will not make the translation worse.

What if that is not the case?

2. The Hidden Risk (When the Reviewer Is Not Better Than the Translator)

In typical workflows, it is totally possible that the reviewer is less capable than the translator. As a PM assigning roles, you may not actually know who is stronger on a given domain, language pair, or content type. This breaks the default logic of TEP.

3. The Consensus-Building Workflow

The alternative workflow discussed was Translation, Review, Implementation (TRI), with arbitration as the mechanism that turns disagreement into a final decision. In this workflow, the reviewer primarily suggests changes instead of directly overwriting the translation. If there is disagreement, the translator can defend their choices and the reviewer can defend their suggestions. Only mutually agreed changes are implemented in the end.

This feels much more robust.

4. Speed, Cost, and the Tradeoff

Here come the operational tradeoffs. TEP is typically cheaper and faster, and in theory it can be done with one person at minimum. The TRI workflow is typically slower and more expensive because it requires at least two people and usually generates friction or back-and-forth.

So this ends up being more of a business decision. Different content types and risk levels justify different workflows.

5. Feedback Loops

In quality management, if you want long-term improvement and consistency, you need a feedback mechanism that does not rely on extra manual effort.

In TEP, sending changes back to the translator often requires a separate, extra step. In the TRI workflow, the translator learns as part of the process because they are actively involved in resolving suggestions and disagreements.

6. The Practical Implication of ISO 17100

We also reviewed ISO 17100 and its requirement that translations be revised by a second person. Harry highlighted that a PM sanity check does not count toward this requirement because PMs are typically not capable of revising the text linguistically. This helped connect workflow choices to client expectations. Some clients may not ask for ISO explicitly, but if they operate in regulated or procurement-heavy environments, they may assume it.

In my opinion, this is a good reminder that quality standards are not just about language. They are also about process evidence. In some contexts, the workflow itself is part of the deliverable.

WEEK 6

1. Last week’s QA checker exercise

This week started with a quick reflection on last week’s QA checker exercise. One point Harry emphasized was that most automated QA systems are designed to catch mechanical issues such as punctuation, number mismatches, missing tags, spacing issues, or formatting inconsistencies. But these systems are not built to evaluate meaning, tone, or contextual accuracy. In other words, they help detect technical problems, not linguistic quality.

In practice, automated QA works best when it focuses on predictable and repeatable checks.

2. The Calibration Problem

Another issue then came up. QA tools sometimes flag things that are not actually errors. When that happens repeatedly, the tool stops being helpful and starts slowing reviewers down. This then becomes a calibration problem. If the checker is too strict, reviewers waste time investigating harmless flags. If it is too loose, real issues slip through.
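One way I can picture measuring this calibration is with two ratios: the share of flags that were real errors, and the share of real errors that were flagged. This is my own framing rather than a method from class, and the segment IDs below are made up for illustration.

```python
def flag_precision_recall(flagged: set, real_errors: set) -> tuple:
    """Return (precision, recall) for a QA checker run.

    precision: share of flagged segments that were real errors.
    recall: share of real errors that the checker flagged.
    """
    true_positives = len(flagged & real_errors)
    precision = true_positives / len(flagged) if flagged else 1.0
    recall = true_positives / len(real_errors) if real_errors else 1.0
    return precision, recall


# Hypothetical segment IDs: the checker flagged four segments,
# while a human reviewer confirmed three real errors.
precision, recall = flag_precision_recall({1, 2, 3, 4}, {2, 4, 7})
print(f"precision={precision:.2f}, recall={recall:.2f}")
```

Low precision corresponds to the "too strict" case, where reviewers chase harmless flags. Low recall corresponds to the "too loose" case, where real issues slip through.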

3. The Cost of Prevention

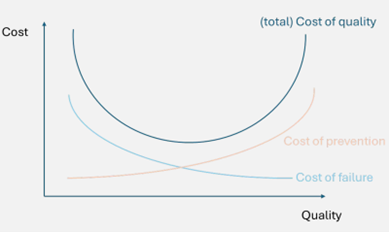

The main concept this week was the cost of quality, starting with cost of prevention. Prevention includes all the steps taken before delivery to reduce the chance of errors, such as better translators, additional review processes, clearer instructions, or more robust workflows.

Quality improvement is not linear. Early improvements are relatively cheap and produce significant gains. But as we push toward near perfect output, the marginal cost rises quickly.

4. The Cost of Failure

Unlike prevention cost, failure cost depends on what errors slip through, whether the client notices, and how they react.

Even when a review stage exists, reviewers do not catch everything. Some errors inevitably reach the client. The outcome might be endless rework, complaints, or a more serious loss of trust.

This is like rolling dice. If you roll once, you might get lucky. But if you repeat the same risky setup over and over again, eventually the bad outcome becomes almost guaranteed.

This framing helped me see prevention spending differently. It is not just about improving quality. It is also about reducing uncertainty.
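The dice analogy can be put into numbers. As a rough sketch, assume each project independently has the same chance of an external failure. Both the independence and the 5% figure are my own simplifying assumptions, not data from class.

```python
def chance_of_at_least_one_failure(p_per_project: float, projects: int) -> float:
    """Probability that at least one of `projects` independent runs fails.

    Computed as 1 minus the chance that every single run succeeds.
    """
    return 1 - (1 - p_per_project) ** projects


# Hypothetical 5% failure chance per project, repeated over more and more projects.
for n in (1, 10, 50):
    print(n, round(chance_of_at_least_one_failure(0.05, n), 3))
```

Even a risk that looks small on one project grows quickly with repetition, which is why prevention spending can be read as buying down uncertainty rather than only improving quality.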

5. “Internal” vs “External” Failures

Internal failures are issues caught before delivery. They create rework, delays, or additional reviewer effort, but the client never sees them.

External failures are issues caught after delivery. These include client complaints, visible mistakes, brand damage, or even loss of contracts.

6. High Risk Content

High risk content includes health and safety information, high visibility marketing materials, or anything tied directly to brand reputation. Low risk content might include informational text that few users will ever read.

This brings me back to an earlier topic from the course. Quality is always tied to purpose. The appropriate workflow depends on the potential impact of failure.

WEEK 7

1. TQA & LQA

If the work happens inside the translation process, we can think of it as TQA. If it happens outside the translation process but is still quality-related, we can think of it as LQA. More broadly, both still sit under QA.

2. Quality Management Does Not Happen Only in the CAT Tool

When we are aligning with the client, forming the SoW, or clarifying expectations, we are usually working through email, Zoom, or other communication channels, not inside Trados or any CAT environment.

3. Localizability Check

We also touched on localizability checks, and I found that discussion especially interesting. One classmate pointed out that localizability is often more important closer to content creation, when there is still room to influence how the content is written. Harry suggested that this kind of thinking belongs more toward the front of the process, when we are still trying to understand what the client is trying to accomplish. This made a lot of sense to me. If content is already finalized, a localizability check becomes more limited. At that point, we may still catch roadblocks or things that do not need to be translated, but we have less power to fix the root issue. So this is another reminder that strong quality management is often about moving key checks earlier.

4. People who might perform LQA tasks

Program-internal team members, independent QA teams, client-preferred third parties (incl. QA vendor), client employees, localization experts, and non-localization laypeople.

This stood out to me because it makes LQA feel much more multidimensional. LQA can happen closer to final delivery and can involve people with very different forms of expertise and very different expectations.

For me, this is the most practical takeaway of the week. Not all reviewers are reviewing for the same reason. A linguistic expert may focus on terminology or accuracy. A client employee may care more about business purpose. A non-localization layperson may react more like an actual end user. That means the review setup itself needs to be intentional.

WEEK 8 SPRING BREAK

WEEK 9

1. Multiple LSP setup

Single vendor risk: If the vendor suddenly becomes unavailable, gets acquired, or simply underperforms, the client has very little flexibility.

Therefore, a multiple LSP setup becomes a useful business strategy. We often talk about quality as if it only lives inside the translation itself, but this discussion reminded me that resilience is also part of quality management.

2. Different vendors can be assigned by workflow, not just by language

This part was pretty familiar to me based on my previous LPM work, so I will not spend too much time documenting that piece here.

3. Upstream problems can cause downstream quality problems

In class, there was a discussion around a classic example: Spanish for Spain versus Latin American Spanish. What stood out to me was that before we even talk about quality, we first need the right market and locale structure in place.

If the scope is defined too broadly or the language buckets are not set up correctly, review conflicts will almost inevitably show up later. A vendor may technically cover “Spanish”, but that does not automatically mean they are the right fit for every regional variant.

This reminded me of a point from earlier weeks: many downstream quality issues are actually caused by upstream scoping decisions.

4. Agencies can learn from each other

One of the interesting parts in today’s class was the point that agencies can actually learn from each other in a multi-agency setup. In this situation, the setup can serve as a control mechanism. In the best case, it can create a strong ecosystem.

But that only works if the client and vendors are coordinating well.

From my perspective, this is where quality management becomes deeply operational. Someone still needs to define boundaries, clarify responsibilities, and make sure handoffs do not create confusion or extra effort.

5. Things to watch out for in a multi-agency setup

- Segregate and anonymize your non-employees

- Don’t unnecessarily share technology

- Don’t unnecessarily divulge internal processes / divisions

- Never share financial information

If one vendor has too much visibility into another vendor’s business details or internal information, that can create tension and distrust that damage collaboration.

WEEK 10

1. Why LQA metrics exist

This week focused on LQA metrics, but what stood out to me first was not the metrics themselves, but why we need them.

As localization work scales, more teams get involved and more aspects of quality need to be evaluated. Without a shared framework, different reviewers may focus on different things (terminology, style, fluency, formatting, etc.) and end up talking past each other.

So in this case, LQA metrics are less about scoring translation, and more about creating a common language for quality across teams.

2. MQM & DQF

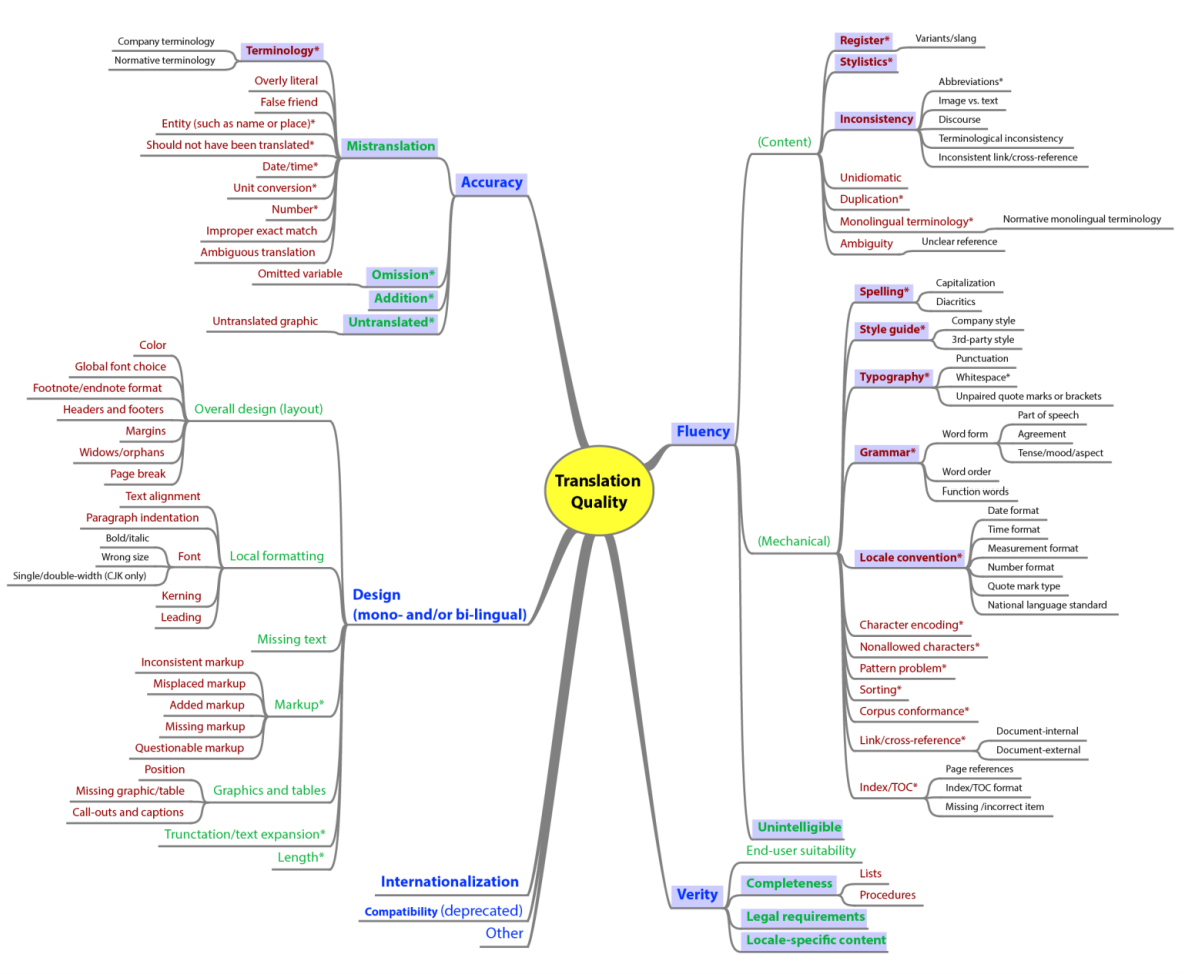

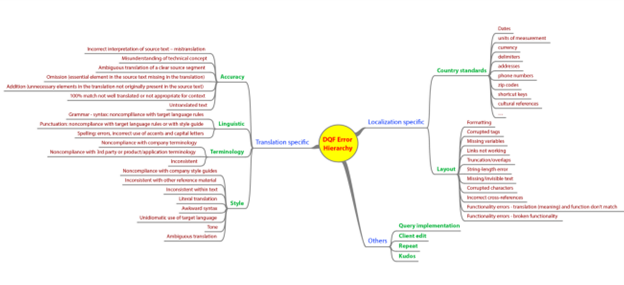

We then looked at MQM and DQF, which are two major frameworks in the industry.

What helped me most was Harry’s explanation of how these two systems differ in emphasis. MQM is extremely broad and can capture many dimensions, such as accuracy, design, fluency, terminology, locale convention, and more. DQF, meanwhile, organizes quality concerns in a way that feels somewhat closer to common localization practice, including translation-specific and localization-specific issues.

3. Categorizing, not scoring

One of the points Harry brought up today truly clicked for me.

People are bad at assigning direct numeric scores consistently, but much better at categorizing problems.

So instead of asking reviewers to decide whether an error is a 2, a 4, or a 7, the framework asks them to classify it into a small number of severity buckets. The score is then calculated from those categories.

- Minor means the issue is noticeable but does not affect comprehension.

- Major means it disrupts understanding and forces the reader to stop or reread.

- Critical means it defeats the purpose of the translation altogether.
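To see how a score can be calculated from these buckets, here is a minimal sketch. The penalty points per severity and the "per 100 words" normalization are hypothetical choices of mine for illustration. Actual MQM or DQF scorecards define their own values.

```python
# Hypothetical penalty points per severity bucket; real scorecards set their own.
SEVERITY_POINTS = {"minor": 1, "major": 5, "critical": 10}


def quality_score(severities: list, word_count: int) -> float:
    """Return a 0-100 score: 100 minus penalty points per 100 words reviewed."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    penalty = sum(SEVERITY_POINTS[severity] for severity in severities)
    penalty_per_100_words = penalty / word_count * 100
    return max(0.0, 100.0 - penalty_per_100_words)


# A reviewer only classifies each issue; the number is derived afterwards.
issues = ["minor", "minor", "major"]
print(round(quality_score(issues, word_count=1000), 2))
```

The reviewer never has to pick a number directly. They only decide which bucket an issue belongs to, and the arithmetic stays the same for every reviewer.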

4. Weighting depends on goals

The last piece of our jigsaw was weighting.

Not all issue types matter equally. Depending on the content, some categories should carry more weight than others. For example, in technical content, accuracy may matter more than style. In marketing content, tone and fluency may matter more.
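As a sketch of how weighting might work, the same set of issues can be scored under different weight profiles. The category names come from the examples above, but the weight values and profile names are hypothetical and would be agreed with the client in practice.

```python
# Hypothetical weights per issue category for two content types.
WEIGHT_PROFILES = {
    "technical": {"accuracy": 2.0, "style": 0.5, "fluency": 1.0},
    "marketing": {"accuracy": 1.0, "style": 2.0, "fluency": 2.0},
}


def weighted_penalty(issue_counts: dict, content_type: str) -> float:
    """Sum issue counts multiplied by the weight of each category.

    Categories missing from the profile default to a weight of 1.0.
    """
    weights = WEIGHT_PROFILES[content_type]
    return sum(
        count * weights.get(category, 1.0)
        for category, count in issue_counts.items()
    )


found = {"accuracy": 1, "style": 3}
print(weighted_penalty(found, "technical"))
print(weighted_penalty(found, "marketing"))
```

With these example weights, the same review result is penalized twice as heavily for marketing content as for technical content, because most of the issues are stylistic.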

WEEK 11

1. LQA vs product testing

LQA checks the language, but product testing checks whether the product actually works. Both are required, but they operate at different layers.

2. Keep up with the development cycle

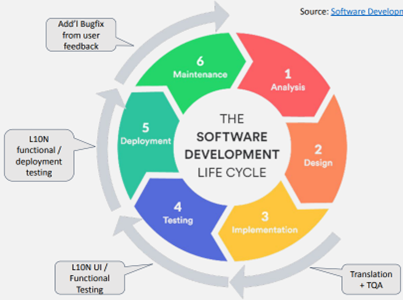

We looked at different software development models, from linear SDLC to Agile and DevOps.

The key takeaway here is that localization does not operate independently. As development cycles become faster and more iterative, localization is expected to move at the same pace.

For example, in an Agile setup, updates happen in short cycles, which means localization and testing also need to happen continuously rather than as a final step.

3. “Internationalization” vs “just translating the product”

One comparison in class that seemed interesting to me was the difference between treating internationalization as a core product strategy versus treating it as an afterthought.

In one scenario, international needs are considered early in planning, design, and implementation. In the other, the product is built for the source market first, and localization is added later as a patch.

The downstream impact is significant. If localization is treated as an add-on, testing is often reduced to “if it runs, it’s good enough,” and many issues are classified as minor.

4. Different types of localization testing

We also went through several types of localization testing, and this helped clarify what LSP-side testing typically focuses on.

Some key categories:

- UI testing, to ensure layouts and text display correctly

- Functional testing, to confirm features still work after localization

- Deployment testing, to verify the product runs in local environments

- Usability testing, to check whether the product meets local user expectations

Not all testing needs to be done by the same team. LSPs often focus on UI and linguistic aspects, while deeper functional testing may remain on the client side.

5. Use checklists

The last part of the session focused on checklists, which felt very familiar because I did similar work at my last job.

From my own experience, building a structured spreadsheet or bug checklist and providing clear, well-organized information and datasets not only helps developers quickly reproduce issues and resolve them more efficiently, but also makes the impact of localization work more visible to the product team.

When issues are documented in a consistent and structured way, it becomes easier for stakeholders to see patterns, understand root causes, and recognize that many problems are not isolated translation errors but product-level issues. This, in turn, helps position the localization team as a necessary part of the overall product quality process rather than just a downstream service.